Privacy Equilibrium: Balancing Privacy Needs in Dynamic Multi-User Augmented Reality Scenarios

UIST 2025

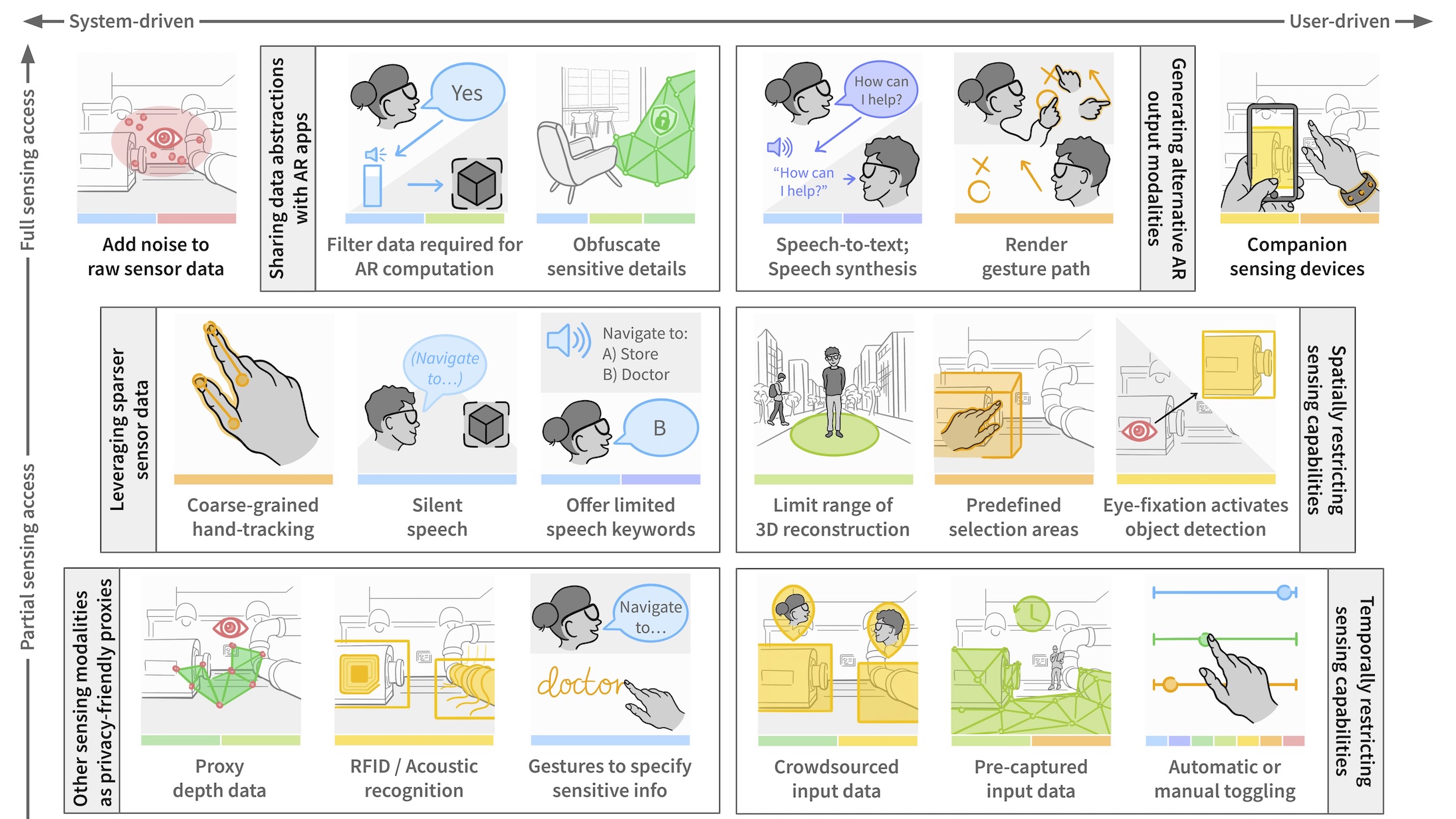

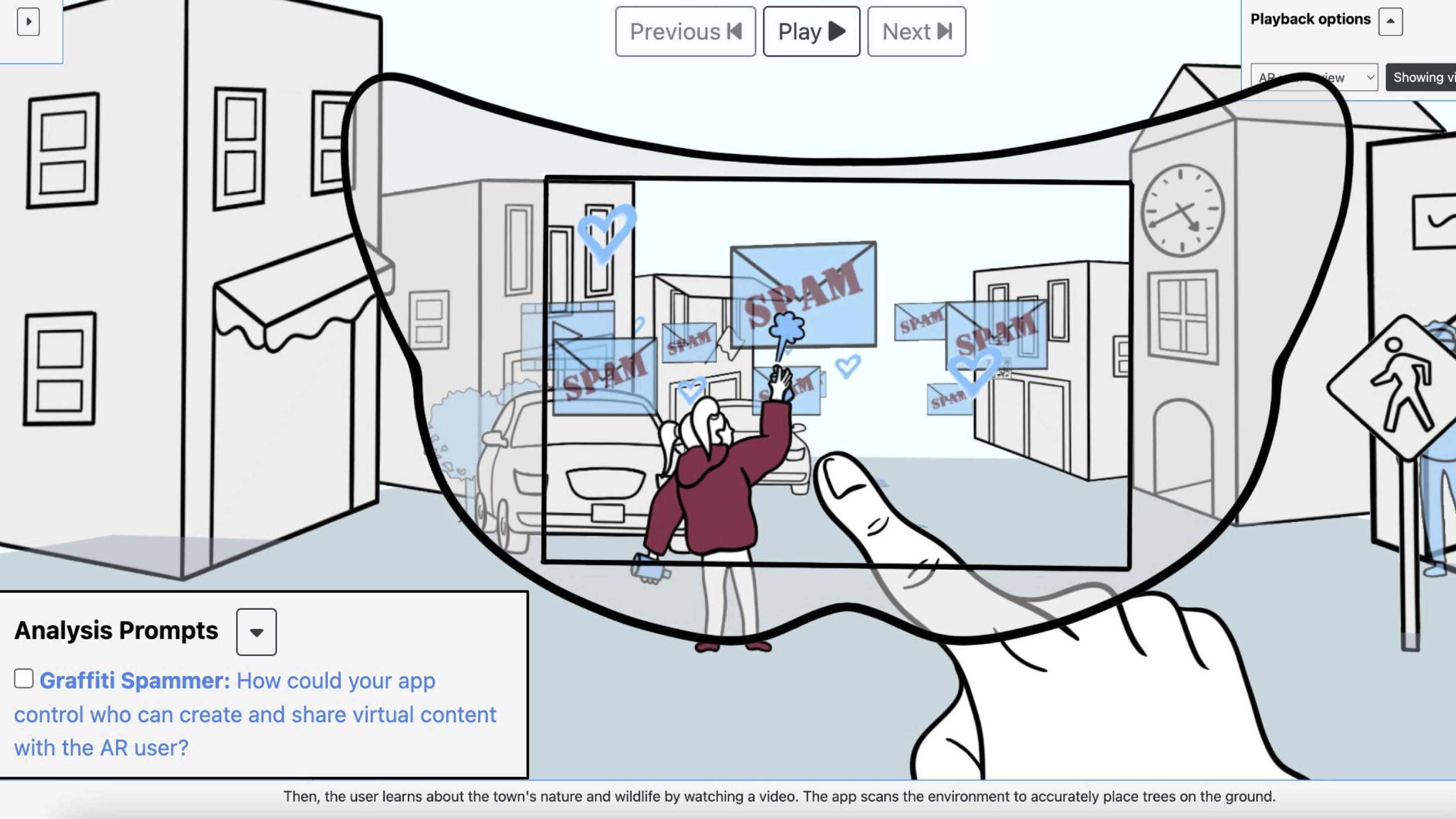

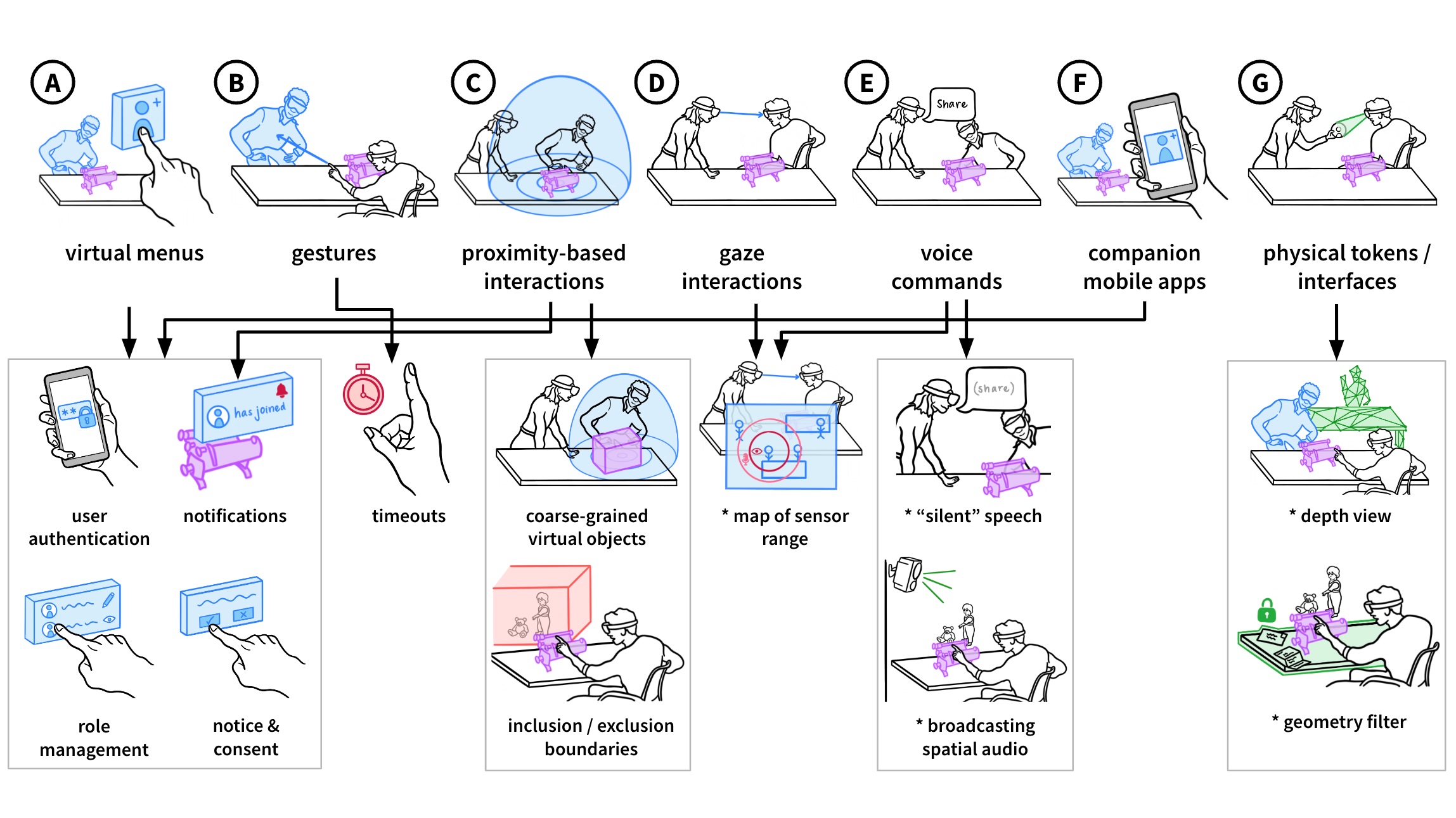

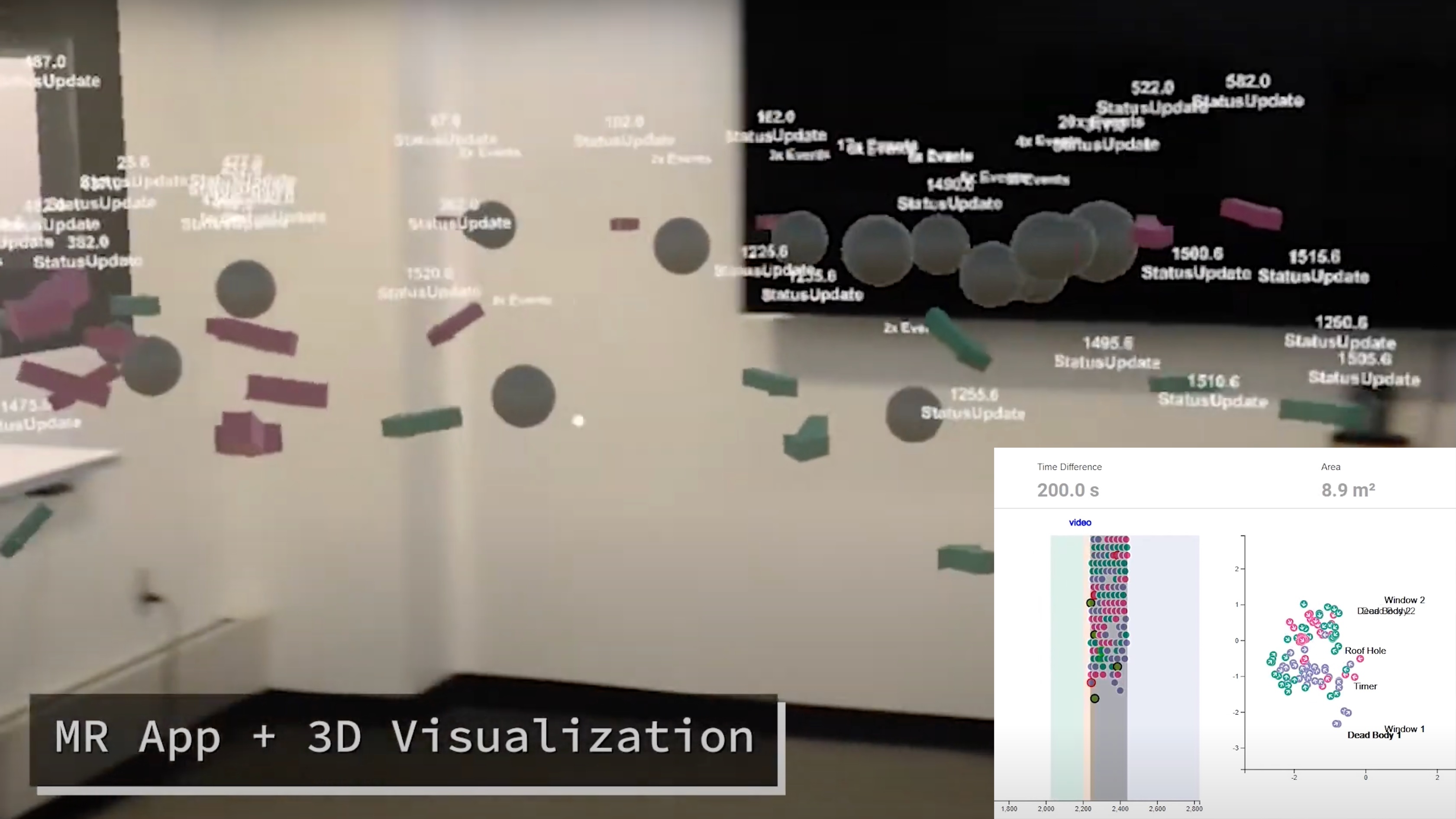

As AR approaches everyday use, novel privacy concerns arise (e.g., environmental sensing techniques capturing sensitive physical areas or bystanders without their consent). To mitigate these risks, my PhD work establishes a foundation for adapting AR interactions to minimize the exposure of sensitive data and for guiding the development of privacy-friendly AR interfaces.

UIST 2025

CHI 2025, Honorable Mention

UIST 2023

CHI 2023

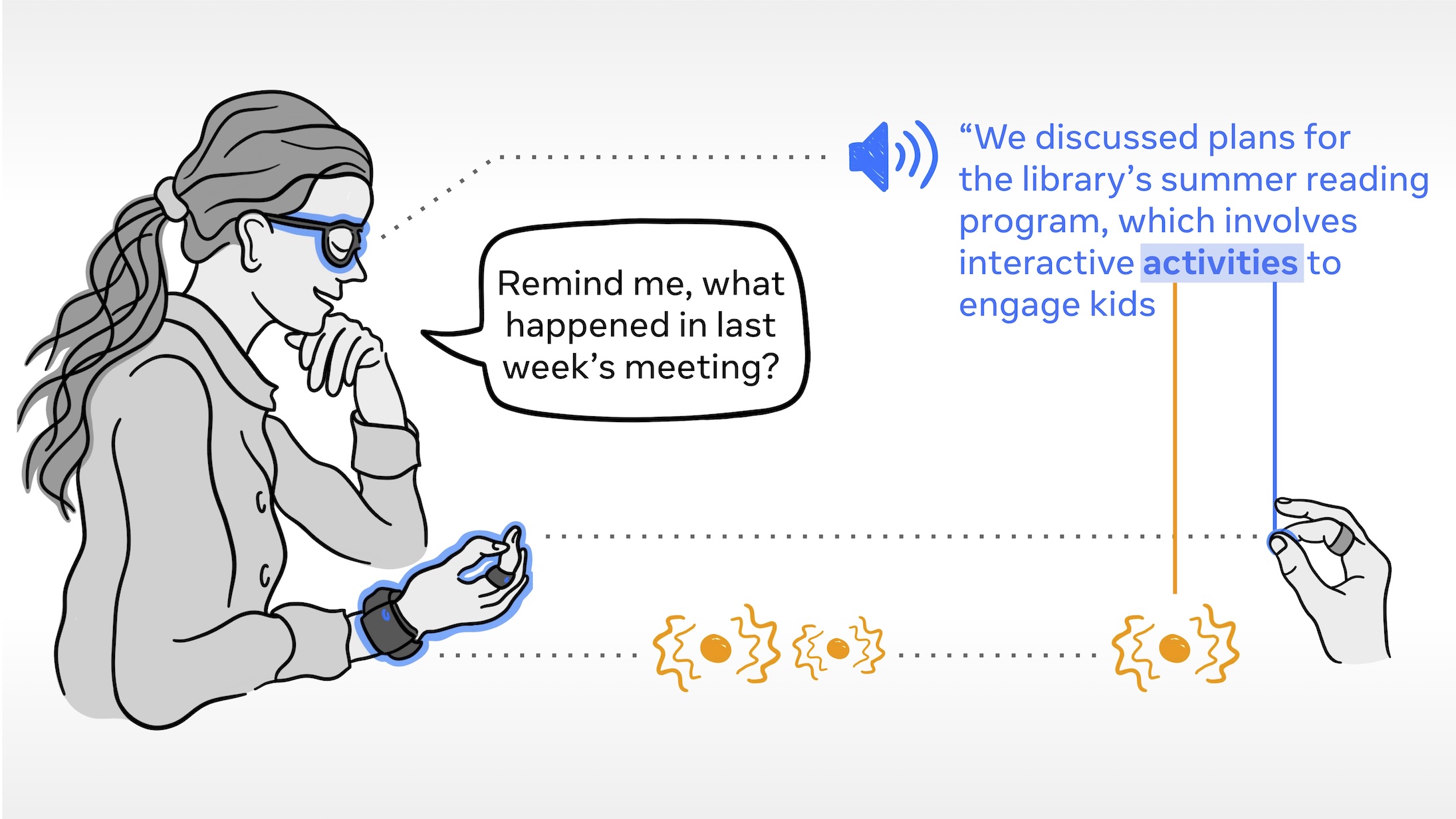

With wearable intelligent systems that support task continuity across settings (e.g., AR devices, AI-enabled smart glasses), users’ goals and their perceptions of privacy risks can evolve frequently. Through internships and other projects, I developed interaction techniques that allow users to rapidly align context-aware systems with their goals.

CHI 2025

UIST 2024, Honorable Mention

UIST 2024

CHI 2022

CHI 2021

CHI 2020, Best Paper